How are you building your own software?

That is the question you should be asking every Gen AI tool vendor. Not which benchmark they won. Not which model they run. How are they building their own product?

Every Gen AI tool vendor is building their own software with Gen AI agents. They have hit bottlenecks in code review, in governance, in deployment velocity. They have redesigned their SDLC from the inside out. So why are they not transparent about that? Why are you getting a product demo instead of a conversation about how they reorganized their own engineering teams and what they learned when their existing processes broke?

How they answer that question tells you more than any benchmark ever will.

Nobody asks it. And that is how you end up like David.

David is a CTO at a payments company. He called me on a Thursday afternoon, which was unusual. When David calls instead of texts, something is stuck.

“I just got out of steering committee,” he said. “I asked my team a simple question. Can someone tell me what our governance model actually is right now?” He paused. “Nobody could answer.”

The VP of Engineering said they were following the vendor’s recommended practices. The security team said each tool had a different data residency posture and they were reviewing all three independently. The CFO asked how much they were spending across the three contracts combined.

Nobody had the number.

David did not have a tool selection problem. The tools worked. All three of them worked. His bottleneck was in his governance model, his SDLC, his organizational design. Three tools meant three approval surfaces, three compliance postures, three security reviews, and zero coherent operating model underneath any of it. One tool means one attack surface, one audit trail, one set of contractual controls.

David had spent six months comparing tools. He should have spent six months fixing his organization.

The top Gen AI coding tools (and which ones are on top changes every week) are close enough in raw capability that the performance delta between them is not your decision driver. Evaluate them seriously, but do it fast, because the question that actually matters is not which tool scores highest on your rubric. The question is which vendor will help you.

Which one will sit in your steering committee and help you redesign your governance model? Which one will coach your executives on how to actually build in a world where agents write the first draft? Pick the one you feel most comfortable building a relationship with. Not the one that won the benchmark this quarter. The one you trust to be in the room when the organizational change gets hard.

The 2028 problem you are creating is not picking the wrong tool. It is spending 2025 and 2026 agonizing over tool selection while your SDLC remains unchanged and your governance model sits in a slide deck nobody follows.

What you need from the vendor is not training for your teams. Training is your job.

What you need is help getting your executive team to adopt an AI-native SDLC. The governance model has to change. The way you review, approve, and deploy code has to change. The roles are changing. What an AI-native engineer looks like is not what a traditional engineer looked like, and your HR team needs to rethink job descriptions, compensation models, and career ladders accordingly.

When I am in these conversations myself, I challenge the executives. I tell them: I could answer every single one of your developers’ AI questions. I could sit here and be the expert in the room indefinitely. But is that what you are trying to build? Are you trying to build an internal capability, or are you trying to keep me engaged forever? The answer is always the first one. And the moment they say it out loud, the conversation shifts. It stops being “teach our teams how to use the tool” and becomes “help us adopt the capability so we do not need you anymore.”

That is the right conversation. The tool is a commodity. The organizational adoption expertise is not.

Structure the engagement with defined scope, a 90-day milestone, and an exit plan that transfers ownership to your internal team. The right vendor will welcome those constraints because they know the engagement should make itself unnecessary.

Now imagine your developers are writing code three times faster. What happens to the rest of the value stream when the coding bottleneck disappears?

The bottleneck moves.

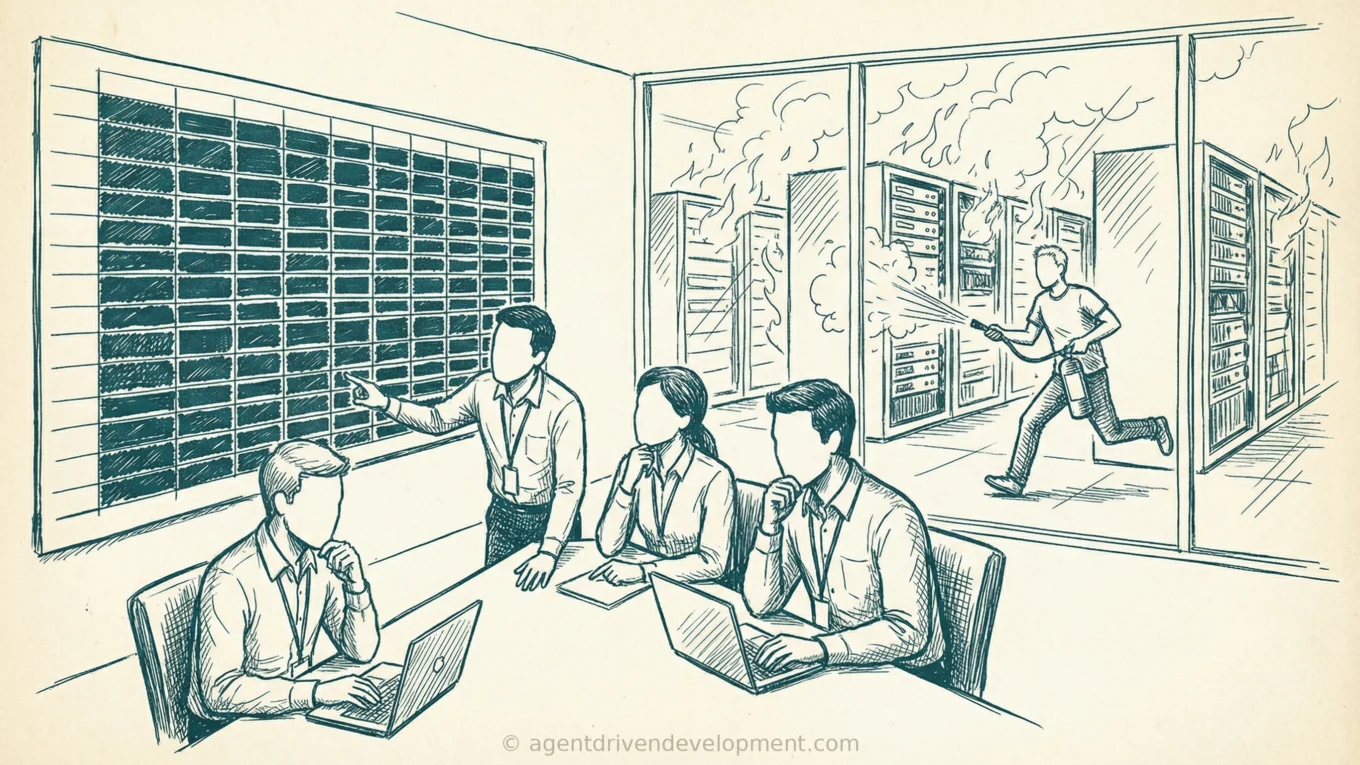

Usually to code review first, then to whatever approval gate your organization has bolted onto the deployment pipeline over the years. The new bottleneck is absorption, not capability. Bottlenecks do not disappear; they migrate.

Chasing every bottleneck in your software factory one at a time could take years. You fix code review and the constraint moves to deployment. You fix deployment and it moves to architecture approval. You are playing whack-a-mole with your own organization.

That is why you should consider something more drastic. Instead of optimizing the existing organization one constraint at a time, build the parallel organization. A small team that operates under an entirely new operating model while the rest of the company runs the old one. Kotter wrote about the dual operating system. Moore wrote about Zone Management. The pattern is the same: you do not transform the existing organization, you build a new one next to it and let the results speak for themselves.

The tool accelerates the coding. The SDLC determines whether the rest of the pipeline can absorb what the tool produces. If your governance model, your review process, and your organizational structure have not changed, you have a faster engine bolted onto the same chassis.

Picking the wrong tool is recoverable. Switching means unwinding some training and CI/CD integration. It is also a credibility cost; you told the board this was the strategy, and now you are changing it. I have had that conversation. The political capital is not fun to spend.

But the migration itself is recoverable. The organizational learning transfers even if the specific tool does not.

Never picking is not recoverable. The SDLC redesign you deferred does not happen retroactively. The competitive gap you allowed to open does not close because you eventually made a decision.

David consolidated to one tool in Q4. But that is not the story. The story is what happened after.

It was not clean. His senior architects pushed back hard on the tool he chose. Two of them had built internal tooling on top of a different vendor’s API and did not want to throw it away. David almost reversed the decision in week three. He did not. He gave the architects a 30-day window to port their tooling and told them the decision was final.

The governance cleanup freed his security team to focus on the architecture work they had been deferring for two quarters. The SDLC redesign cut the deployment cycle for their core payments API from three weeks to four days. The parallel org shipped its first production deployment in 47 days. His CFO, for the first time, could tell the board exactly what the AI investment was producing and exactly what it cost.

He texted me last month.

“Should have done this a year ago.”

Nobody regrets picking. They regret waiting. And the ones who got it right did not pick the best tool. They picked the vendor who helped them fix their organization.