
My daughter Lily is nearly five. Last Sunday over waffles, I told her: “Put the plate on the counter.”

She walked straight to the bathroom counter.

I almost got frustrated. Then I realized — she had just washed her hands there before breakfast. In her context, that was “the counter.” She executed the instruction perfectly. She just did not have my context.

So I changed my approach. Now I ask: “Lily, can you tell me the steps you are going to take to put that plate on the kitchen counter?”

If her plan involves climbing on the counter and swinging from the light fixture, we catch that before she starts. That is the investment: taking two minutes upfront to build a spec and implementation plan together, instead of hoping it works out and dealing with the mess afterward.

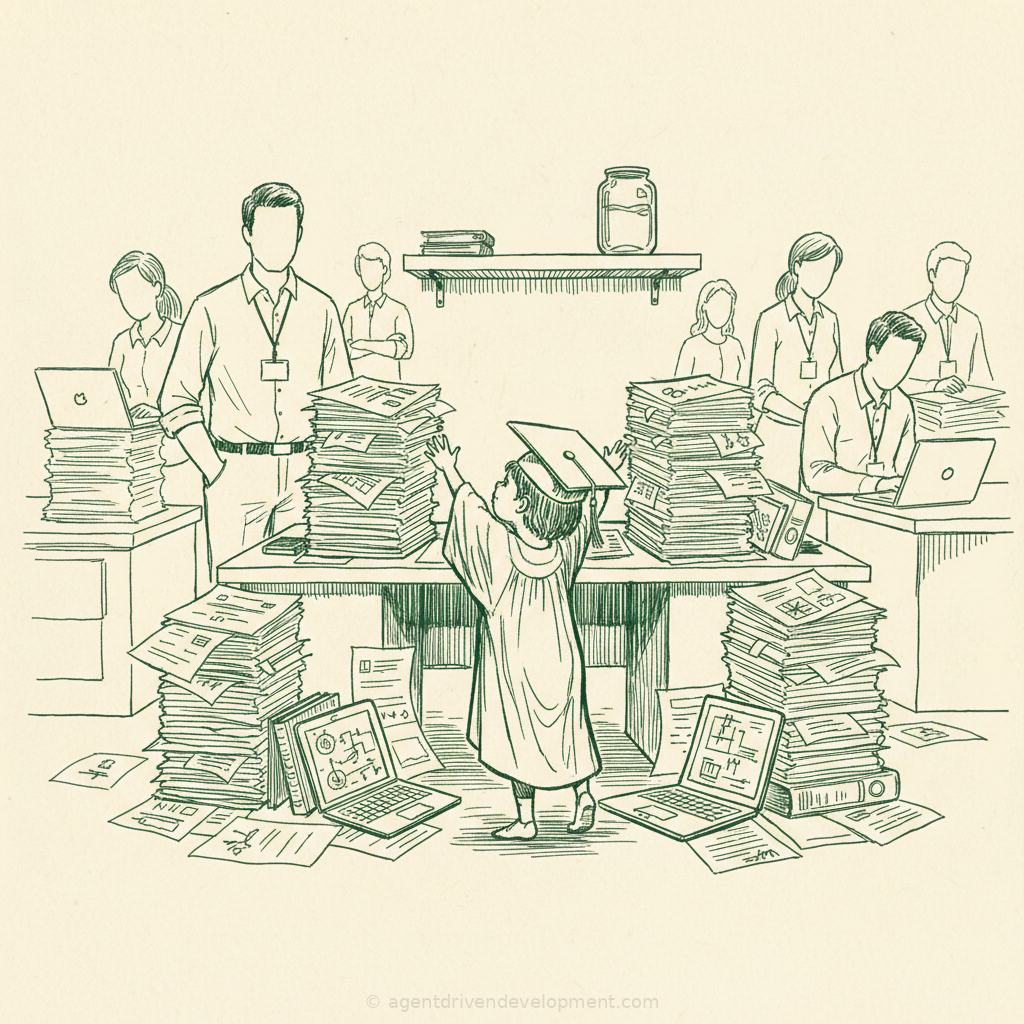

Now imagine Lily has every PhD and basically all of human knowledge in her head. That is your large language model.

You are giving billion-parameter models vaguer instructions than you give your kids

Here is what I see in every organization I work with. An engineer opens a chat with an AI agent and types: “Fix this code.” “Make this better.” “Optimize our process.” Three words. Maybe four. And then they are surprised when the output misses the mark.

Think about what you would do if a junior developer walked up to your desk and said “fix this code.” You would ask seventeen follow-up questions. What is broken? What does “fixed” look like? What are the constraints? What can you not touch? What is the timeline? You would never hand someone a function with no context and say “make it better” and expect them to read your mind.

But that is exactly what most teams do with AI agents. Every single day.

I watched a senior developer last month spend forty-five minutes fighting with an agent over a database migration. The agent kept suggesting approaches that would have worked beautifully — on a greenfield project. But this was a twelve-year-old system with three undocumented foreign key constraints, a reporting pipeline that ran nightly against raw tables, and a compliance requirement that no column could be dropped without a ninety-day deprecation window. The agent did not know any of that. The developer never told it.

After forty-five minutes of frustration, he said: “This thing is useless.”

The thing was not useless. The thing had no context. That is not the same problem, but it feels identical from the inside.

Knowledge is not judgment

Lily knows what a counter is. She knows where counters are in our house. She even knows that plates go on counters. What she does not have is the judgment to disambiguate which counter I mean based on the situation — we just finished breakfast, so obviously I mean the kitchen counter. That “obviously” is twenty years of adult context compressed into a single word. She is four. She does not have it yet.

Your AI agent has the same gap, and it is bigger than most engineering leaders realize. The agent knows every design pattern, every framework, every algorithm. It has read more code than your entire team will write in their careers. But it does not know that your billing service has a race condition when two invoices close in the same millisecond. It does not know that the function it is looking at was written to work around a vendor API bug that has been there since 2019, or that three enterprise customers depend on that workaround. It does not know that the last time someone “optimized” that query, the reporting team lost two days of data and your VP of Sales called your CTO at eleven at night.

That is not a knowledge problem. That is a context problem. And context is what separates a five-year-old with every PhD from a senior engineer who has been in your codebase for three years.

The five-year-old will give you the textbook answer every time. The senior engineer will give you the answer that does not break production.

The real failure mode is not wrong answers

Here is the part nobody talks about. The dangerous output from an AI agent is not the obviously wrong answer. You catch those. The dangerous output is the answer that looks right, passes review, and breaks something three weeks later that nobody connects back to the change.

I have seen this happen four times in the last two months across organizations I work with. The pattern is always the same. An agent generates a clean, well-structured solution. It passes tests. It looks good in code review — better than what most humans would have written, honestly. And it ships. Then three weeks later, an edge case surfaces that the agent could not have known about because the edge case lives in tribal knowledge, not in the codebase.

One team had an agent refactor a payment processing module. Beautiful code. Clean separation of concerns. Textbook SOLID. The agent even wrote comprehensive tests. What the agent did not know was that the original code had a specific ordering dependency with a downstream fraud detection service. The original developer had left a comment about it, but it was in a different file, in a different repository, three years old. The agent produced better code that broke a system it did not know existed.

That is not an AI failure. That is an organization that has not figured out how to give an AI agent the context it needs to operate safely in their specific environment.

The plate goes on the kitchen counter

Here is what actually works. I do this with Lily and I do this with AI agents, and the principle is identical.

Give context first. Not after the first bad output. Before any output. “We are in the payment processing module. The goal is cutting latency without breaking PCI compliance. This service has a downstream dependency on the fraud detection pipeline that expects events in a specific order. Do not change the event emission sequence.”
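One way to make "context first" a habit is to write the context down as structured data and render it as the opening of every request. What follows is a minimal sketch, not a prescribed format: `TaskContext` and its field names are hypothetical, and the example values are simply the payment scenario from the paragraph above.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class TaskContext:
    """Hypothetical container for what the agent should know before any output."""
    module: str
    goal: str
    constraints: List[str] = field(default_factory=list)
    do_not_change: List[str] = field(default_factory=list)

    def to_preamble(self) -> str:
        """Render the context as plain text to place at the top of a request."""
        lines = [f"We are in {self.module}.", f"Goal: {self.goal}."]
        if self.constraints:
            lines.append("Constraints:")
            lines.extend(f"- {item}" for item in self.constraints)
        if self.do_not_change:
            lines.append("Do not change:")
            lines.extend(f"- {item}" for item in self.do_not_change)
        return "\n".join(lines)


context = TaskContext(
    module="the payment processing module",
    goal="cut latency without breaking PCI compliance",
    constraints=[
        "the fraud detection pipeline downstream expects events in a specific order",
    ],
    do_not_change=["the event emission sequence"],
)
print(context.to_preamble())
```

The point of the structure is that an empty `constraints` list becomes visible. If you cannot fill it in, you have found the question to answer before you ask the agent anything.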

Ask for the plan before the code. “Walk me through your approach before you write anything.” This is the Lily technique. If the plan involves swinging from the light fixture, you catch it in thirty seconds instead of debugging it for an hour. The engineers who skip this step are the ones who spend their afternoons undoing what the agent built in the morning.
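If your team drives agents from scripts rather than a chat window, the same gate can be sketched in a few lines. This is an illustration under stated assumptions: `ask_agent` stands in for whatever function sends a prompt to your agent and returns its reply, and `approve` stands in for a human (or a checklist) reviewing the plan. Neither is a real library call.

```python
from typing import Callable, Optional

PLAN_REQUEST = "Walk me through your approach before you write anything."


def plan_then_build(
    task: str,
    ask_agent: Callable[[str], str],   # hypothetical: sends a prompt, returns the reply
    approve: Callable[[str], bool],    # hypothetical: a person or checklist reviewing the plan
) -> Optional[str]:
    """Ask for a plan first; only request code if the plan is approved."""
    plan = ask_agent(f"{task}\n\n{PLAN_REQUEST}")
    if not approve(plan):
        # The plan involved the light fixture. Stop before any code exists.
        return None
    return ask_agent(
        f"{task}\n\nApproved plan:\n{plan}\n\nImplement this plan and nothing else."
    )
```

Returning `None` on a rejected plan is a deliberate choice in this sketch: the caller has to go back and fix the context or the task description, rather than letting the agent try again with the same missing information.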

Iterate with specifics. Not “this is wrong, try again.” That is the equivalent of telling Lily “not that counter” without telling her which counter. Instead: “The caching approach works, but this data has a four-hour freshness requirement because of regulatory reporting. Adjust the TTL and add a cache invalidation hook on the nightly batch job.”

Save the patterns that work. When you find an approach that produces consistently good output for your codebase — the context preamble, the constraint list, the verification steps — document it. Make it your team’s standard. This is not prompt engineering. This is specification architecture, and it is the skill that separates teams getting ten-times productivity from teams getting ten-times frustration.

This is not a tools problem

I want to be direct about this because the industry conversation is pointed in the wrong direction. The discourse is about which model is better, which IDE integration is faster, which agent framework has the best benchmarks. None of that matters if your team cannot articulate what they need.

The engineers who struggle with AI agents are not struggling because the tools are bad. They are struggling because they have spent their entire careers in an environment where context was ambient. You sat next to the person who knew about the fraud detection dependency. You overheard the conversation about the vendor API bug. You were in the meeting where the VP of Sales lost it about the reporting data. That context lived in the air. You absorbed it without trying.

AI agents do not sit in your meetings. They do not overhear your Slack channels. They do not have three years of institutional memory. Every piece of context you want them to have, you need to give them explicitly. That is a skill. It is a new skill. And most teams have not built it yet.

The culture change here matters more than the technology budget. You can buy the best model on the market and your team will still put the plate on the bathroom counter if nobody teaches them to specify which counter they mean.

What this means for your organization

Your team needs to become context architects. Not prompt crafters — that framing is too small. Context architects who understand what the agent needs to know about your system, your constraints, your history, and your business rules before it writes a single line of code. The teams I work with that have made this shift are seeing first-pass success rates above eighty percent. The teams that have not are hovering around thirty and blaming the model.

Your ROI measurement is probably wrong. If you are measuring “tools deployed” or “percentage of developers with Copilot licenses,” you are measuring inputs, not outcomes. Measure cycle time reduction. Measure first-pass success rate. Measure how often an agent-generated change ships without rework. Those numbers tell you whether your team has learned to communicate with the agent. The license count does not.
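To make those outcome measures concrete, here is a rough sketch of how they could be computed from a log of agent-generated changes. The `AgentChange` record and its fields are assumptions for illustration. Your tracker will have its own schema, and you will need your own definition of what counts as "rework."

```python
from dataclasses import dataclass
from statistics import median
from typing import List


@dataclass
class AgentChange:
    """Hypothetical record of one agent-generated change."""
    accepted_first_pass: bool   # the first output was usable without another round
    shipped: bool
    needed_rework: bool         # someone had to fix it after it shipped
    cycle_time_hours: float     # from request to merge (or to abandonment)


def first_pass_success_rate(changes: List[AgentChange]) -> float:
    """Share of all changes whose first output was accepted."""
    if not changes:
        return 0.0
    return sum(c.accepted_first_pass for c in changes) / len(changes)


def rework_free_ship_rate(changes: List[AgentChange]) -> float:
    """Share of shipped changes that needed no rework afterward."""
    shipped = [c for c in changes if c.shipped]
    if not shipped:
        return 0.0
    return sum(not c.needed_rework for c in shipped) / len(shipped)


def median_cycle_time(changes: List[AgentChange]) -> float:
    """Median cycle time in hours across all changes."""
    return median(c.cycle_time_hours for c in changes) if changes else 0.0


def cycle_time_reduction(baseline_hours: float, current_hours: float) -> float:
    """Fractional reduction relative to a baseline, e.g. 0.2 means 20% faster."""
    return (baseline_hours - current_hours) / baseline_hours
```

None of these functions mention licenses or seats. They only look at what happened to the changes themselves, which is the distinction between inputs and outcomes.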

And be patient with the learning curve. I watch Lily get better every day. Not because she magically got smarter, but because we invested in clearer communication and better feedback loops instead of hoping she would figure it out. Your engineers are going through the same process. The ones who feel like the tools are broken are usually the ones who need better context-giving habits, not better tools. Be kind about that. Show them what good looks like instead of telling them to try harder.

The question is not whether AI agents transform how your team builds software. They will. The question is whether you will invest the time to give them clear context — or keep wondering why the plate ended up on the bathroom counter.