14 min read

Mike called me last Thursday. Mike is one of the best data engineers I know. He has been building streaming pipelines for a decade — the kind of engineer who speaks in backpressure metrics and watermark semantics the way most people talk about the weather.

Mike was working with Apache Flink. If you are not in the data engineering world, Flink is one of the premier frameworks for real-time data streaming. Serious companies run serious workloads on it. It has been in production at scale for years. Not some hobby project.

And Mike could not get an AI agent to maintain his Flink code.

Not because the agent was dumb. Not because Flink is bad. But because every time the agent tried to reason about the pipeline — modify a transformation, add a windowing function, debug a state management issue — it produced code that was subtly wrong. Not compiler-error wrong. Semantically wrong. The kind of wrong that passes tests and breaks in production at 2 AM when the event stream spikes.

Mike had tried everything. Better prompts. More context. Different models. RAG over the Flink docs. He spent three weeks on this. Three weeks of a senior data engineer’s time. At $95 an hour, that is roughly $11,400 in labor to fight a problem that was not a tooling problem, a model problem, or a framework problem.

And then he called me.

“I don’t think the agent is the problem. I think the code is the problem.”

The Question Nobody Is Asking

This is what Mike and I realized over the next two hours.

We have spent fifty years defining what “good code” means for human maintainers. Robert Martin’s Clean Code. The Gang of Four’s Design Patterns. Fowler’s Refactoring. Fred Brooks in 1975 explaining why adding people to a late project makes it later. Different decades, different problems, same underlying question: how do you write code that other humans can understand, modify, and extend without breaking things?

Those books shaped an entire profession. SOLID. DRY. Dependency injection. Code reviews. All of it built around one assumption: the next person to touch this code will be a human being.

That assumption is no longer safe.

The next person to touch your code might be an agent. Increasingly, it will be. And the question nobody is asking is this: is your code agent-maintainable?

Not clean by Uncle Bob’s standards. Not well-patterned by the Gang of Four’s catalog. Agent-maintainable. Can an AI agent — right now, with today’s models — reliably modify and extend your codebase without introducing subtle defects?

For most codebases, the answer is no.

This Is Not About Flink

Mike’s problem was not Flink. Flink is excellent software. The Flink team built something that handles real-time data processing at scale, and they did it well.

But Flink was designed for human engineers. Its API surface, its abstractions, its configuration model, its state management semantics — all of it was designed to be understood by experienced humans who have internalized the mental models of stream processing. Watermarks. Event time versus processing time. Keyed state versus operator state. These are concepts that take a human engineer months to develop deep intuition about.

An agent does not build intuition. It has the training data it was trained on and the context you give it. If the patterns in your code do not align with the patterns the agent has seen millions of times — patterns it has statistical confidence about — you get subtle errors. Not because the agent is stupid. Because it is operating outside its zone of reliable inference.

This is not a Flink problem. It is a codebase problem. And it is about to become yours.

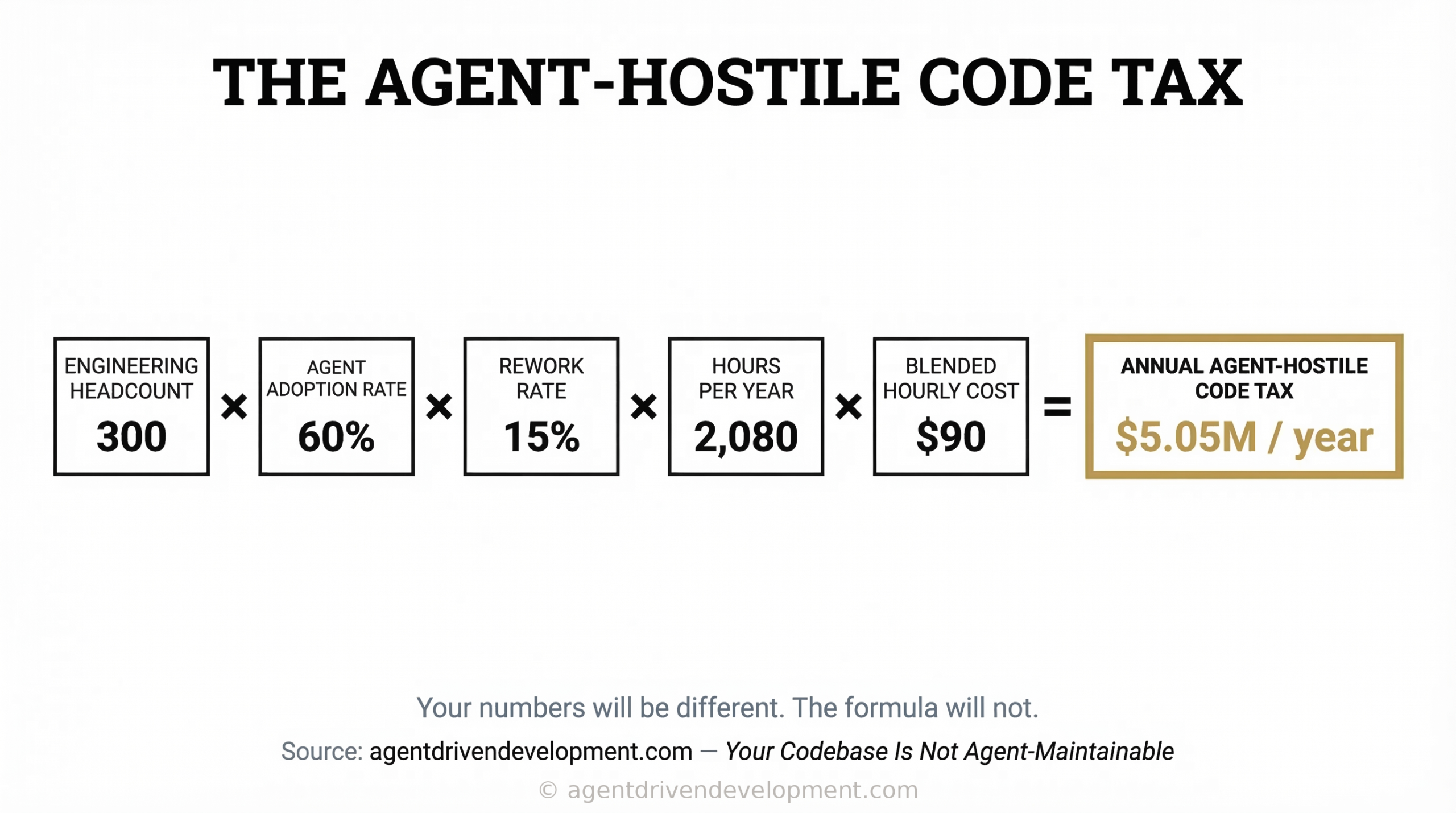

Take your engineering headcount. Multiply by your agent adoption rate — the percentage of engineers actively using AI agents for code changes. Multiply by the percentage of their agent-assisted time spent on rework — rewriting agent output, hand-holding agents through unfamiliar patterns, debugging subtle semantic errors that passed CI. Multiply by their loaded hourly cost.

For a 300-person engineering org at 60% agent adoption, 15% rework rate, and $90/hour loaded cost: that is 300 × 0.6 × 0.15 × 2,080 hours × $90 = roughly $5M a year in agent-hostile code tax. Your numbers will be different. The formula will not.

Industry data shows AI-generated code produces 1.7x more defects than human-written code. But that is an average across all codebases — agent-friendly and agent-hostile alike. In agent-hostile code, the multiplier is worse. In agent-maintainable code, it approaches 1:1. If you are spending $1.5M on Copilot Enterprise licenses and your code is not agent-maintainable, you are buying a tool that will underperform its benchmarks in your environment. Agent-maintainable code is not a separate initiative from your AI tooling investment. It is a prerequisite for getting ROI from it.

This is not only a modern software problem. There are 220 billion lines of COBOL in active production right now. They process 95% of US ATM transactions. They run grid management systems, insurance claims, and government benefits. The average COBOL developer is 55 years old. Ten percent of that workforce retires every year. The engineers who built those systems are not going to be around forever, and the engineers coming up behind them need agents that can help them maintain code they did not write. Those codebases are the most agent-hostile code on the planet. The agent-maintainability question is not just about Apache Flink and React. For critical infrastructure, it is existential.

The Biases Are the Feature

Every AI model has biases. Not the political kind. The statistical kind. Patterns it has seen so many times during training that it can reproduce them with extraordinary fidelity. Patterns where its confidence is highest and its error rate is lowest.

You know these patterns intuitively if you have spent any time with agents. Standard REST API structures. React components with hooks. Express middleware chains. Django views. Spring Boot services. PostgreSQL queries. These are not exotic technologies. They are the ten thousand most common patterns on GitHub. And agents are phenomenal at working with them.

Ask an agent to add a new endpoint to a well-structured Express API and it will do it correctly on the first try. A React component refactor following standard patterns? Nailed. A Flink pipeline with custom state management and checkpointing semantics? The agent will produce something that looks right but is not.

The difference is not intelligence. The difference is statistical exposure. The agent has seen ten million Express APIs and forty thousand Flink pipelines. Its confidence distribution is wildly different across those two surfaces.

You can use the model’s biases to your advantage.

Instead of fighting the agent’s statistical intuitions — instead of spending three weeks crafting prompts to overcome them — structure your codebase so the patterns the agent is most confident about are the patterns it encounters in your code.

That is not dumbing down your architecture. That is designing for a new kind of contributor.

The Clean Code Books Had the Right Idea for the Wrong Audience

Robert Martin did not invent good code. People wrote good code before Clean Code showed up in 2008. What Martin did was name the patterns. He gave the profession a shared vocabulary — single responsibility, open-closed, dependency inversion — and a way to evaluate code against a standard. The Gang of Four did something similar with Design Patterns in 1994. Martin Fowler cataloged the refactoring moves a few years later. Extract method. Inline variable. Replace conditional with polymorphism. A shared playbook.

Every one of those books was answering the same question: how do we make code that humans can maintain?

We need the same thing for agents. Someone needs to do for agent-maintainable code what Uncle Bob did for clean code — name the patterns, codify the principles, give teams a standard they can evaluate against.

That standard is not going to look like the old one.

What Agent-Maintainable Code Might Look Like

We are early. Nobody has a complete framework for this yet. But I have been building software with agents every day for over a year, and there are patterns that are becoming clear.

Conventional over clever. That custom monad transformer stack your functional programming enthusiast built? Elegant. Maybe even correct. The agent will butcher it every time. The boring, standard implementation that looks like every tutorial on the internet? The agent will maintain that flawlessly. “Clever” and “maintainable” always had tension. In an agent-maintained world, that tension resolves decisively.

Explicit over implicit. Agents cannot infer the design decisions you made at the whiteboard three years ago. They cannot divine that a particular function has a side effect that is load-bearing for billing. Everything that matters needs to be in the code — not in a Confluence doc, not in tribal knowledge. Types. Names. Contracts. Assertions. If the agent needs to know it to work safely, write it down where the agent will see it.

Small, self-contained units. The single responsibility principle, applied to a contributor that has a context window instead of long-term memory. The more a change requires understanding state outside the file the agent is editing, the higher the error rate. Small files. Clear boundaries. Minimal coupling. We have always said this. Now the defect rate proves it.

Standard toolchains. Webpack, Vite, Maven, Gradle, Cargo — massive representation in training data. The agent knows them cold. Your custom Makefile with forty targets and twelve environment variables? The agent is guessing. When agents guess about build configuration, things break in ways that are hard to trace.

Tests as contracts. In a human-maintained codebase, tests verify behavior. When agents are contributing code, tests become the contract — the mechanism by which your engineers verify the agent’s work before it ships. I wrote about how this changes testing strategy entirely. The testing square is not optional. It is the foundation.

What We Leave Behind

Some of the engineering investments your teams have made — investments that were correct when humans were the only maintainers — are now liabilities. Not because they were wrong. Because your teams now include agents, and agents maintain code differently than humans do.

BDD — Behavior Driven Development, Gherkin syntax, Cucumber scenarios — was designed to create a shared language between business stakeholders and developers. Agents do not need that translation layer. They need assertions they can evaluate programmatically. If your teams are heavily invested in BDD, evaluate whether that investment is now a tax.

Deep abstraction layers are a different kind of problem. Interfaces for interfaces, factories for factories, dependency injection containers that take a week to onboard a new hire — those were designed for human brains that can only hold seven things in working memory. Agents do not have that constraint. They have context windows. And every layer they traverse is another opportunity to lose the thread between intent and implementation.

Then there is metaprogramming. Runtime code generation. Heavy reflection. Convention-over-configuration frameworks that wire behavior through naming patterns. Brilliant for reducing human keystrokes. Nightmares for agents that need to trace a request from entry point to database and back. In regulated industries, the errors these produce are not bugs. They are audit findings.

And if you are maintaining COBOL, RPG, or SAP ABAP — code that predates the internet — you face an even sharper version of this problem. Those languages have the smallest footprint in any model’s training data. Agents working in those codebases are operating with the least statistical confidence and the highest error rates. The same principles apply — explicit over implicit, small self-contained units, tests as contracts — but the gap between where those codebases are and where they need to be is measured in decades, not sprints.

I am not saying burn it down. I am saying your architecture review process needs a new question: can the agent work here safely? If the answer is no, that module is a candidate for refactoring — not because the code is bad, but because your team now includes a new kind of contributor that reads code differently than the humans who wrote it.

The Refactoring Conversation You Need to Have

If you are a CTO or VP of Engineering, you need to have a new conversation with your team this quarter. Not about AI adoption. Not about which coding assistant to buy. About whether your codebase is ready to be maintained by agents.

Because here is what is coming. In two years — maybe less — the majority of code changes in production systems will be authored or co-authored by agents. That is not a prediction. That is the trajectory. If your codebase is hostile to agents, you will fall behind teams whose codebases are not. And “hostile to agents” does not mean bad code. It means code that was written for human-only teams — and your teams are not human-only anymore.

This is a refactoring effort. It is not a rewrite. You do not need to throw away your codebase. You need to make it legible to a new kind of reader. The same way you would refactor a codebase before onboarding a new team — except the new team has read every public repository on GitHub and has very strong opinions about how Express middleware should be structured.

Start with your highest-churn files. The ones that get modified most frequently. Those are the files agents will touch first and touch most often. Make them conventional. Make them explicit. Make them boring. Boring is the new beautiful when your contributor is an AI.

Test coverage matters differently now. Not “we should have tests” — that is the old argument. The new argument: if an agent modifies a file and there are no tests, nobody can verify the change without reading every line. Your engineers have judgment and institutional knowledge. The agent contributing alongside them has neither. Tests bridge that gap.

Your abstractions need scrutiny too. Are they earning their keep, or are they serving human ergonomics that the agent does not need? Every unnecessary abstraction is a tax on agent comprehension.

The Five-Question Assessment

Five questions. Ask them about any repository. The answers will tell you where your agent-maintainability problems live.

One. What percentage of your top-twenty highest-churn files use patterns that appear in fewer than ten thousand public repositories? This is the statistical exposure question. If your most-modified files use niche patterns, the agent is guessing every time it touches them. That is your highest-risk surface.

Two. How many changes require understanding state that lives in a different file, service, or system? Cross-boundary changes are where agents fail hardest. If a single modification requires the agent to reason about three services, a message queue, and a database trigger, the error rate goes through the roof.

Three. Can an agent run your build, execute your tests, and verify its own changes without human intervention? If the answer is no — if your build requires manual steps, environment-specific secrets, or tribal knowledge about which test suite to run — you do not have an agent-maintainable codebase. You have a human-dependent one.

Four. What is your agent iteration rate? When an agent makes a change, how many attempts does it take to produce something correct? If the answer is consistently more than two, the code is the problem, not the agent. Track this number. It is the leading indicator of agent-maintainability.

Five. Does your compliance and audit framework account for agent-authored code? If an agent modifies your payment processing logic and introduces a subtle semantic error — not a crash, not a test failure, a behavioral drift — can your audit trail identify that the change was agent-authored? Can your review process catch semantic errors that pass CI? If you are in financial services, this is a SOX finding. If you are in utilities, a NERC CIP violation carries fines up to $1M per day. If you are in healthcare, it is a HIPAA exposure. Regulatory frameworks were built around human-authored code with human-reviewed changes. Agent-authored code in agent-hostile codebases is a compliance gap that most audit teams have not even thought about yet.

These five questions will not give you a score. They will give you a map. The modules where the answers are worst are the modules you refactor first.

We Are Early. The Direction Is Clear.

There is no Clean Agent-Maintainable Code on your shelf yet. The principles above are a starting point, not a complete framework. Someone will write the book. It might be me. It might be you.

But the name is right. Agent-maintainable code. That is the standard your codebase will be evaluated against in 2027. Not clean code — that is table stakes. Not well-architected — that too. Agent-maintainable. And waiting to figure it out costs you velocity every sprint.

What Mike Did Next

After our call, Mike did something I did not expect. He did not switch models or write better prompts or try a different agent framework.

He refactored his Flink pipeline.

He replaced the custom state management with patterns that followed the most common Flink examples in the documentation. He made the windowing logic explicit where it had been implicit. He broke one large pipeline into smaller, self-contained stages. He added property-based tests at every stage boundary.

Then he pointed the same agent at the same codebase.

It worked. Not perfectly — we are not there yet. But before the refactor, the agent produced semantically correct code about one in four attempts. After, it was closer to three in four. The agent iteration rate — the number of attempts to get a correct change — dropped from an average of six to under two.

Run those numbers. At six iterations per change, Mike was spending roughly thirty minutes per modification getting the agent to produce correct code. At under two iterations, that dropped to about eight minutes. Across the twenty to thirty changes per week Mike makes to that pipeline, that is a shift from fifteen hours of agent-wrangling per week to four. Eleven hours reclaimed. At $95 an hour, that is over $1,000 a week — $54,000 a year — on one pipeline, for one engineer. The refactoring took him four days. It paid for itself in the first week.

The same agent. The same model. The same Flink framework. Different code structure.

The agent was never the problem. The code was.

Robert Martin published Clean Code in 2008. Before that book, people wrote clean code. After that book, people had a standard. A name for what they were doing. A way to teach it and evaluate it and hold each other accountable to it.

We are in the “before” moment right now. People are writing agent-maintainable code. They just do not have a name for it yet.

Now they do.

Your team has a new member. You did not hire it. You did not onboard it. But it is writing code in your repositories right now, and it has opinions about how your code should be structured.

The question is whether you will listen.