The ballroom at the local hotel smelled like reheated salmon and ambition. Two hundred people in business casual, half of them checking email under the table, the other half pretending to pay attention to the CIO’s remarks about “the long arc of organizational change.”

They were giving out the service awards.

Karen from Finance got her ten-year plaque. Polite applause. Dave from Infrastructure got his twenty. Louder applause, a few whistles from the back, someone yelled “you’re still here?” and the room laughed. And then the CIO adjusted the microphone and said something about a person who had been “instrumental in our Agile journey from the very beginning.”

The Vice President of Agile Transformation walked to the stage. Fifteen-year crystal clock. Standing ovation. The CIO talked about vision, about persistence, about the courage to drive change in a large organization. The VP said a few words about the teams, the coaches, the “incredible progress we have made together.” The room clapped. The salmon got cold.

You were at that table. You clapped too. You might have been the one who nominated them for the award. And then you did the math.

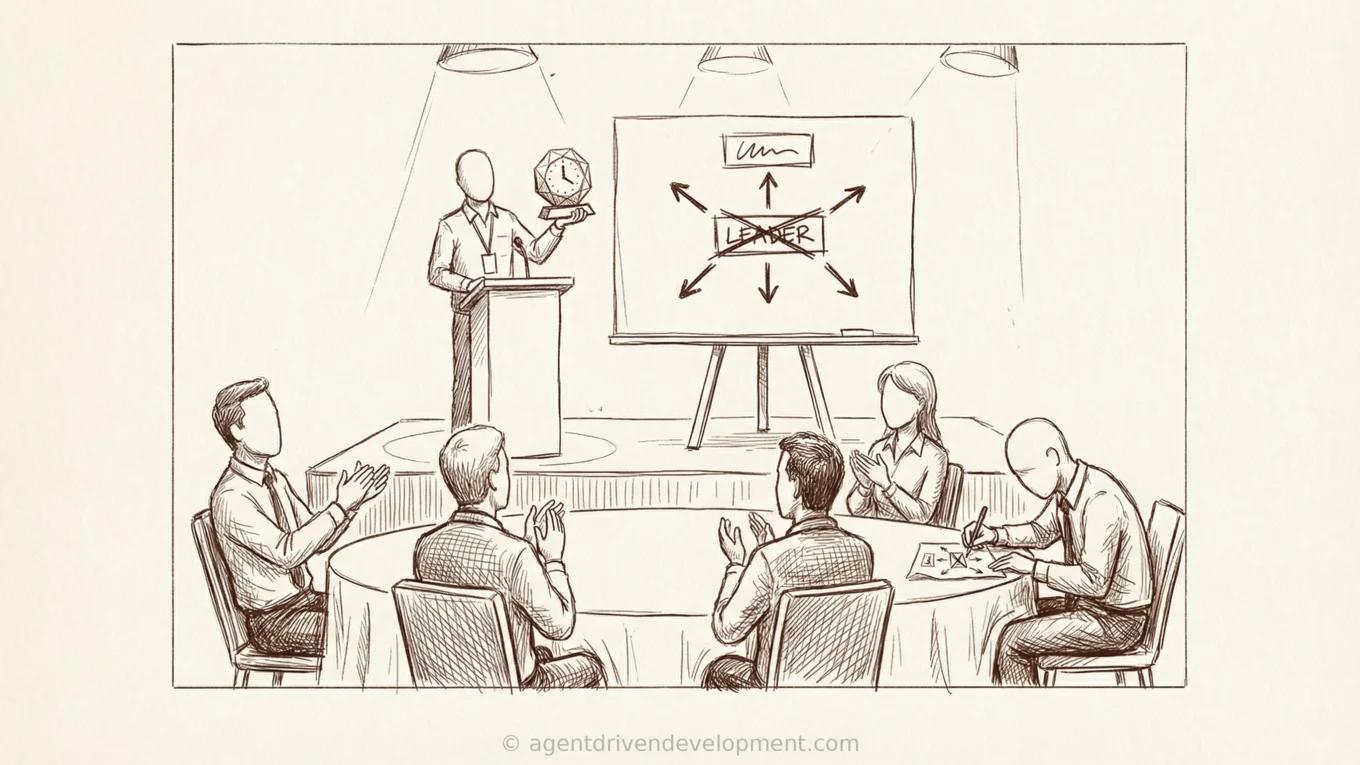

This person was hired in 2011. You brought in coaches. You built a Center of Excellence. You had a charter, a budget, a slide with a box in the center and arrows pointing outward (the box was always the same shade of corporate blue). Fifteen years later, that VP is holding a crystal clock, and your organization still cannot release software without a change advisory board meeting and a three-week regression cycle.

I am not making fun of this person. The VP did the job they were asked to do. But when you look at what actually changed in fifteen years, you can only find two things: another layer of bureaucracy, and an Agile coach assigned to every team who mostly attends standups and asks “what are your blockers?” Not faster delivery. Not better software. A new layer of meetings and a person in every room whose job is to make sure the meetings follow the right format.

The problem is the organizational pattern that created the job, funded it for fifteen years, and then gave it a crystal clock instead of asking the obvious question: if the transformation was going to work this way, would it not have worked by now?

I keep thinking about that clock because I am watching the same organizations build AI teams. Same box. Same arrows. Same eight people. And I want to ask you, before you hire the Head of AI and give them a charter and a slide, whether you are sure you want to buy another crystal clock in 2041.

Here Is What Happens Next

I have seen this movie. I can tell you the ending.

You hire a Head of AI. Smart person. 90-day plan. Governance framework, tool evaluation, pilot with two volunteer teams. The slide has a box in the center. By Q1 2027, the team is eight people. Eleven vendors evaluated. A 40-page responsible AI policy. Four proofs of concept, none in production. The steering committee is impressed by the demos. The rest of the organization is still waiting for access.

By Q3 2027, the team pivots to enablement. Training sessions. Internal wiki. An AI Champions program where one person from each team attends a monthly meeting and reports back. The Champions attend the meetings. They do not build agents. They attend meetings about building agents.

By Q3 2028, you have AI coaches on every team and no AI. A Slack channel with 200 members and four posts per month, three of which are links to articles about what other companies are doing. $6 million spent over two years (team salaries, vendor licenses, infrastructure, the conferences). Two agents in production, one of which was built by someone who bypassed the AI team entirely.

Meanwhile, across the street, a manufacturer the same size as yours gave everyone AI access on day one, wrote a one-page acceptable use policy, and told their people to automate their own work. Within six months they had over 30 agents in production, most built by the people who do the work.

AI is not a practice you coach. It is a competency. It lives in every person’s hands or it does not exist at all.

The Arguments Are the Same Arguments

I have asked CIOs and CTOs at a dozen organizations this year why they created a centralized AI team. The answers are the same three arguments that justified the Agile CoE in 2011.

“Because AI requires specialized skills.” This is the “Agile requires trained Scrum Masters” argument wearing a new hat. Building an AI capability in 2022 required ML engineers, training pipelines, custom models, and serious infrastructure. That was a real constraint. It is no longer a real constraint. Foundation models changed the economics entirely. Building an agent in April 2026 requires the ability to describe what you want done, evaluate whether the output is correct, and refine until it is. Your supply chain analyst has that skill. Your compliance officer has that skill. The barrier is not expertise. It has not been expertise for over a year.

“Because we need governance.” Imagine it is 1995. Email is new to your organization. Instead of giving everyone an account and a one-page guide on appropriate use, your CIO creates the Email Center of Excellence. Eight people. A charter: “Drive email adoption across the enterprise.” The Email Team reviews every outgoing email for compliance with the Email Governance Framework (14 pages, updated quarterly). You want to send a message to a client? Submit a request. The Email Team will evaluate whether email is the appropriate channel, review your subject line for brand alignment, and confirm your font conforms to the corporate email style guide. Estimated turnaround: five business days.

How many hours did you devote to email training? Was it years? Did you build an email dojo?

No. You gave them the tool, you gave them a few sensible rules, and you trusted them to figure it out. Because they are adults who understand their own work.

AI governance can work the same way. A one-page framework (what data can the agent access, who reviews the output, what happens when it is wrong) gives every team what they need to build responsibly. A 40-page governance document produced by a team that has never built an agent gives you paralysis.
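To show how small that one page can be, here is a minimal sketch of the three questions written down as data. Every name in it (`AgentPolicy`, the `DataTier` levels, the reviewer role, the example agent) is hypothetical and invented for illustration. It is not a standard, and it is not any particular organization's policy.

```python
from dataclasses import dataclass
from enum import Enum


class DataTier(Enum):
    """Hypothetical data classification tiers, least to most sensitive."""
    PUBLIC = 1
    INTERNAL = 2
    RESTRICTED = 3


@dataclass(frozen=True)
class AgentPolicy:
    """The three questions from the one-page framework, as fields."""
    max_data_tier: DataTier  # what data can the agent access?
    output_reviewer: str     # who reviews the output?
    on_error: str            # what happens when it is wrong?

    def allows(self, tier: DataTier) -> bool:
        """True if the agent may read data classified at `tier`."""
        return tier.value <= self.max_data_tier.value


# Example policy for an imaginary supply chain reconciliation agent.
policy = AgentPolicy(
    max_data_tier=DataTier.INTERNAL,
    output_reviewer="supply-chain-analyst",
    on_error="pause agent and notify reviewer",
)

print(policy.allows(DataTier.INTERNAL))    # True
print(policy.allows(DataTier.RESTRICTED))  # False
```

Three fields and one rule. A team can fill that in before lunch, which is the point.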

“Because we need to coordinate.” Coordinate what? This was the argument for keeping the Agile CoE alive in year five, and year eight, and year twelve. The coordination never produced coordination. It produced meetings, status reports, and maturity assessments. The teams that actually became Agile did it themselves, usually in spite of the CoE. If every team is building agents for their own work, within a governance framework, using tools they have access to, what requires centralized coordination? You did not centralize DevOps forever. You eventually embedded it into every team. You are going to do the same thing with AI. The question is whether you do it now or waste eighteen months arriving at the same conclusion.

Meanwhile, the People Who Are Not on the AI Team

While the AI team reviews markdown and updates vendor matrices, individual people are building agents that solve real problems. Not everywhere, but in enough places that I have stopped treating it as an anomaly.

My brother-in-law is a nurse. No engineering background. No computer science. He started building proof-of-concept agents for better patient outcomes on his own time, describing clinical workflows to an AI the way he would explain them to a new nurse on his unit. Medication reconciliation edge cases, shift handoff patterns, the specific way his hospital’s EHR buries the information that matters. Nobody asked him to do this. Nobody from the hospital’s AI team (yes, they have one) gave him permission. He just saw a tool that could do what he spends half his shift doing manually, and he described the work.

I talked to a director at a mid-size manufacturer last month. He has four teams. Two of them are high performers. Two of them are not, and he has been managing around that gap for years, shielding them from the consequences while avoiding the honest conversations about performance that he should have had a long time ago. He told me, with the kind of calm that comes from having already done the math, that his two strong teams plus agents can now absorb the workload of all four. That means he finally has to have the conversations he has been avoiding: who gets reskilled, who gets redeployed, and who needs a different role. He is not pretending that is easy. But he is also not pretending that carrying two underperforming teams for another three years is responsible leadership when the capability exists to redistribute the work. His company’s AI team does not know he is thinking this way. They are still finalizing their Q2 tool evaluation report.

I watched an operations manager at a utility (I will call her Diane) build an agent that monitors equipment maintenance logs and generates exception reports when sensor readings deviate from historical baselines. Diane is not a developer. She described the process the way she would explain it to someone shadowing her, then spent most of three days refining how the agent handled the difference between a genuine anomaly and a seasonal pattern. It runs in production now. Her team gets the exception report every morning instead of spending half a day pulling it manually. The AI team does not know she built it.
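I do not have Diane's agent to share, and what follows is not it. It is a minimal sketch of the one idea she spent three days on: judging a reading against the baseline for its own season rather than the whole year. The function name, the threshold, and the sensor readings are all made up for illustration.

```python
from statistics import mean, stdev


def is_exception(reading, history_same_season, z_threshold=3.0):
    """Flag a reading that deviates from the baseline for its own season."""
    if len(history_same_season) < 2:
        return False  # not enough history to judge
    baseline = mean(history_same_season)
    spread = stdev(history_same_season)
    if spread == 0:
        return reading != baseline
    return abs(reading - baseline) / spread > z_threshold


# Made-up readings for one sensor, grouped by season.
history = {
    "winter": [40.1, 41.0, 39.5, 40.6, 40.2],
    "summer": [61.8, 62.5, 60.9, 62.1, 61.4],
}

# The same reading is ordinary in summer and a large deviation in winter.
print(is_exception(62.0, history["summer"]))  # False
print(is_exception(62.0, history["winter"]))  # True
```

The statistics are the easy part. Knowing that the summer numbers are supposed to look different is the part only someone who does the work can supply.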

These are not exceptional people. They are a nurse, a director, and an operations manager who understand their own work deeply enough to describe it clearly. That is all it takes now. Every person in your organization who can train a new hire can build an agent. And right now, most of them are waiting for the AI team to give them permission.

The Math

A centralized AI team of eight people, fully loaded (salary, benefits, tools, vendor licenses, the conferences they attend to “stay current”), costs roughly $1.8 to $2.2 million per year. Across the twelve organizations I assessed this year, centralized AI teams deployed an average of roughly one agent to production in their first twelve months. Some deployed zero. The best deployed two. Even if you credit half the team’s cost to governance, enablement, and vendor evaluation (which is generous, but let us be generous), you are still paying a million dollars per production agent.

Now consider the alternative. Give every team access to AI tools. Budget $500 per person per year for licenses. Allocate four hours per week for experimentation. Provide a one-page governance framework. For a 200-person organization, you are looking at roughly $100K in tooling, plus the opportunity cost of the experimentation time. Four hours a week across 200 people at a fully loaded rate of $75 per hour is roughly $3 million a year. That is real money. I am not going to hide it behind a footnote. But most of those hours are currently spent on the manual work the agents replace. If even 20 percent of the experiments produce a working agent that saves two hours a week per person, the math breaks even inside six months. In my experience, the share that succeeds is higher than 20 percent, and the time saved per agent is higher than two hours.
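If you want to check the arithmetic, here it is laid out as a short script. The dollar figures come straight from the two paragraphs above. The only assumptions added are taking the midpoint of the team cost range and counting 52 working weeks with no allowance for holidays or leave.

```python
# Centralized team: figures from the text.
team_cost_low, team_cost_high = 1_800_000, 2_200_000  # per year, fully loaded
agents_first_year = 1       # average across the assessed organizations
credited_share = 0.5        # half the cost credited to governance and enablement

midpoint = (team_cost_low + team_cost_high) / 2  # assumption: use the midpoint
cost_per_agent = midpoint * (1 - credited_share) / agents_first_year

# Distributed approach: figures from the text.
people = 200
license_per_person = 500    # dollars per year
hours_per_week = 4
loaded_rate = 75            # dollars per hour
weeks_per_year = 52         # assumption: no holidays or leave

tooling = people * license_per_person
experiment_time = people * hours_per_week * loaded_rate * weeks_per_year

print(f"Centralized cost per production agent: ${cost_per_agent:,.0f}")
print(f"Distributed tooling per year:          ${tooling:,.0f}")
print(f"Distributed experimentation time:      ${experiment_time:,.0f}")
```

Change the inputs to your own headcount and rates. The shape of the comparison tends to hold.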

The organizations I work with that take this distributed approach see 15 to 30 agents deployed in the first six months. Not all of them survive. But the ones that do were built by the people who need them, for the work those people already understand, and they stay in production because the person who built each one is the person who maintains it. No handoff. No backlog. No dependency on a team across the hall.

Even on the most conservative math, a million dollars per production agent versus $100K and some recaptured time for fifteen is not a close call. The Agile CoE had the same economics problem. You paid for eight coaches for fifteen years. How much Agile did you get?

What You Actually Need

This problem has already been solved. Farley, Kim, Humble, and Finster, the engineers who built the Continuous Delivery movement, proved that anyone can deploy safely if the pipeline enforces the rules. The answer to “how do you let everyone move fast without breaking everything” was not “create a team to do it for them.” It was: build trust into the pipeline. Automate compliance. Make governance a thing the system enforces, not a thing a team reviews.

- Give every team access to AI tools. Not a pilot. Not a phased rollout. Access. If you are in a regulated industry (healthcare, financial services, energy), “access” means access within the guardrails your compliance team already knows how to build. A HIPAA-scoped environment, a FedRAMP boundary, a data classification tier. The point is not “no rules.” The point is that the rules should be infrastructure, not a committee. The cost is trivial relative to the centralized team.

- Build the governance into the pipeline. What data can agents access? Enforce it at the infrastructure level. What outputs require human review? Build that gate into the workflow. What happens when an agent produces something wrong? Define the circuit breaker and automate it. You do not make deployments safe by having a committee review every release. You build automated tests, security scans, and rollback mechanisms into the pipeline so anyone can deploy with confidence.

- Allocate four hours per week per team for experimentation. If you do not protect the time, it will not happen, because everyone’s calendar is already full of the work they are doing the old way.

- Turn your AI team into the people who build and maintain the governance pipeline, not the people who review everyone else’s work. Measure them on how many agents other teams deployed safely, not on how many documents they produced.

- Share what works, kill what does not. “I built this, it does this, here is how.” Let successes be visible. Let failures be quiet. The organization will pattern-match toward what works.
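The second item in that list (governance built into the pipeline) is the one people find hardest to picture, so here is a minimal sketch of its three checks: a data access rule, a human review gate, and a circuit breaker. `AgentGate` and everything inside it is hypothetical. A real version would live in your infrastructure and workflow tooling, not in a Python class.

```python
from dataclasses import dataclass


@dataclass
class AgentGate:
    """Hypothetical pipeline gate wrapping one agent."""
    allowed_sources: set
    review_required_for: set
    max_errors: int = 3
    errors: int = 0
    tripped: bool = False

    def check_access(self, source: str) -> bool:
        """Data access rule: only allow-listed sources, and only while
        the circuit breaker has not tripped."""
        return not self.tripped and source in self.allowed_sources

    def needs_review(self, output_kind: str) -> bool:
        """Review rule: some kinds of output go to a human first."""
        return output_kind in self.review_required_for

    def record_error(self) -> None:
        """Circuit breaker: disable the agent after too many bad outputs."""
        self.errors += 1
        if self.errors >= self.max_errors:
            self.tripped = True


gate = AgentGate(
    allowed_sources={"maintenance_logs"},
    review_required_for={"customer_email"},
    max_errors=2,
)
print(gate.check_access("maintenance_logs"))  # True
print(gate.check_access("payroll"))           # False
print(gate.needs_review("customer_email"))    # True

gate.record_error()
gate.record_error()
print(gate.check_access("maintenance_logs"))  # False, the breaker has tripped
```

Nobody reviewed anything in that example. The rules ran every time, which is more than a committee can say.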

This Is a Core Capability. Why Are You Outsourcing It?

There is a question underneath all of this that I have not said directly yet, so let me say it now: what is the end state?

Not “what is the roadmap.” Not “what is the vision.” What does your organization look like when AI capability is fully absorbed? Because if you cannot describe that end state, you are not transforming. You are just spending.

The end state is not an AI team. The end state is an organization where building and using agents is as ordinary as writing an email or opening a spreadsheet. Where the supply chain group builds their own reconciliation agents and the compliance team builds their own audit agents and nobody files a request to get permission. Where AI is not a special initiative. It is how work gets done.

To get there, you need three things that a Center of Excellence will never give you.

First, define the end-state org design. Not where you are. Where you are going. What does the engineering organization look like when every team has agent capability? What happens to the coordination layers that exist only because humans were slow? What roles change, what roles disappear, what new roles emerge? If you have not drawn that picture, you are navigating without a destination, and every mile you drive is a guess.

Second, enable your leaders to get you there. Not your engineers. Your leaders. The VPs and directors who control the calendars, the budgets, the governance models. If they do not understand what agent-driven development looks like in production (not in a demo, not in a slide, in production), they will keep approving structures that block it. Your leaders stopped building years ago, and now they are making architectural decisions about a technology they have never used. (That is why vendors own your AI strategy.) Get them in a room. Show them what the work looks like. Not a workshop, not a webinar. Two hours where they see real agents solving real problems and then have the hard conversation about what needs to change.

Third, teach the capability. Not “enable” it. Not “champion” it. Teach it. The way you teach any core competency that the organization cannot function without. You did not outsource learning to write code. You did not outsource learning to use version control. You did not create a Git Center of Excellence with eight people and arrows on a slide. You taught people the skill and you expected them to use it. AI is the same. It is a core capability, and outsourcing it to a team across the hall is the same as outsourcing your ability to write software. You would never do that. The tool does not matter. (Your organization does.)

The Question

AI is a competency. It is not a product, it is not a platform, and it is not a department. You do not have a critical thinking team. You do not have an email team. You stopped having an Agile CoE (or did you? is someone getting a twenty-year clock in 2031?).

The technology you are trying to scale through a centralized team is the same technology that makes centralized teams unnecessary. Putting it in a team and expecting it to radiate outward is like putting literacy in a department and expecting the rest of the organization to learn to read by proximity.

Your people could all be building agents right now. A nurse is doing it on his own time. A director is doing it to redistribute work and finally have the conversations he has been avoiding. Diane did it in three days. They did not need a Center of Excellence. They needed access, a few sensible rules, and the trust that comes from being treated like professionals who understand their own work.

You can hire the Head of AI, draw the box, and wait. You have seen this movie. You know the ending. Or you could skip the eighteen months, skip the crystal clock, and give your people the tools, the permission, and the trust to build agents for their own work.

How did that work out last time?