Every executive I sit down with wants to talk about AI tools. Which one is fastest, which one benchmarks best, which one their competitor just adopted. I keep steering the conversation somewhere they do not expect, and it starts with a question I learned at a logistics company in Indianapolis when I was 23 years old, driving a forklift and writing PHP in the same shift. The question has nothing to do with software. It has everything to do with why your AI investment is underperforming.

Indianapolis, 2010

I graduated college around 2008 and moved to Indianapolis to work at a small logistics company as a programmer. If you have never worked at a small logistics company, let me paint the picture for you.

You are not just a developer. You are the developer who also runs second shift because someone has to, who drives the forklift because Marcus called in sick and the truck is already at the dock, who somehow knows the difference between LTL and FTL freight (Less Than Truckload and Full Truckload, for those of you who were lucky enough to never learn this), what a bill of lading is, and why the driver just refused your dock appointment.

You learn way too much about logistics. Freight hauling, carrier rates, last-mile delivery windows, the entire unglamorous machinery that moves physical things from one place to another. And somewhere in there, between writing PHP and shrink-wrapping pallets, you develop an intuition about transportation that sticks with you permanently.

Fifteen years later, I am sitting across from CTOs and VPs of Engineering who are trying to figure out which AI tool will transform their software development lifecycle, and I keep asking them the same question I learned on that loading dock. They do not expect a logistics question. But it is the right one.

The Last-Mile Question

In logistics, there is a deceptively simple question that determines everything. You are the last-mile carrier, running the final leg of the delivery: what type of transportation do you need?

In Indianapolis, the answer depends on what you are carrying. If you are delivering food, probably a moped. If you are delivering freight, a 53-foot semi. If you are delivering important legal documents across town in thirty minutes, maybe a Porsche.

The fastest form of ground transportation depends on the cargo. That part is obvious. It is the same logic executives use when they evaluate AI coding tools: what am I trying to carry? Code generation, test automation, documentation, refactoring? Pick the tool that matches the cargo.

But that logic is incomplete. It also depends on where you are operating.

Marseille, France

On my honeymoon, we rented a car in the south of France. The rental company handed us the keys to a Citroën C4 Cactus. If you have not seen one, look it up. It is a real car. It is small (even by European standards), and it has these puffy air-bump panels on the doors like it is wearing a life jacket. The entire vehicle looks like it was designed by someone who expected you to hit things.

This turned out to be prescient.

Marseille is one of the oldest cities in France, founded around 600 BC by Greek sailors. The streets in the old city were laid out for people. Maybe donkeys. Maybe a horse if you were feeling ambitious. They were not designed for cars, and they were certainly not designed for the tiny, life-jacketed Citroën C4 Cactus.

The streets curve without warning, narrow until two walls are brushing your mirrors, dead-end into staircases, and angle at intersections that make no geometric sense. They emerged over centuries from foot traffic and stone walls and the simple human desire to get from the market to the harbor without walking uphill.

I drove the Cactus into a house. Not at speed, not dramatically, just a gentle, inevitable crunch as I tried to navigate a corner that was not designed for anything with four wheels. The air bumps absorbed most of it. The house did not seem to notice.

Indianapolis is a grid. You can navigate it in a semi truck with your eyes half closed because the streets were designed with logic, with intention, with the assumption that vehicles would use them. Marseille’s streets were designed a thousand years before the internal combustion engine existed.

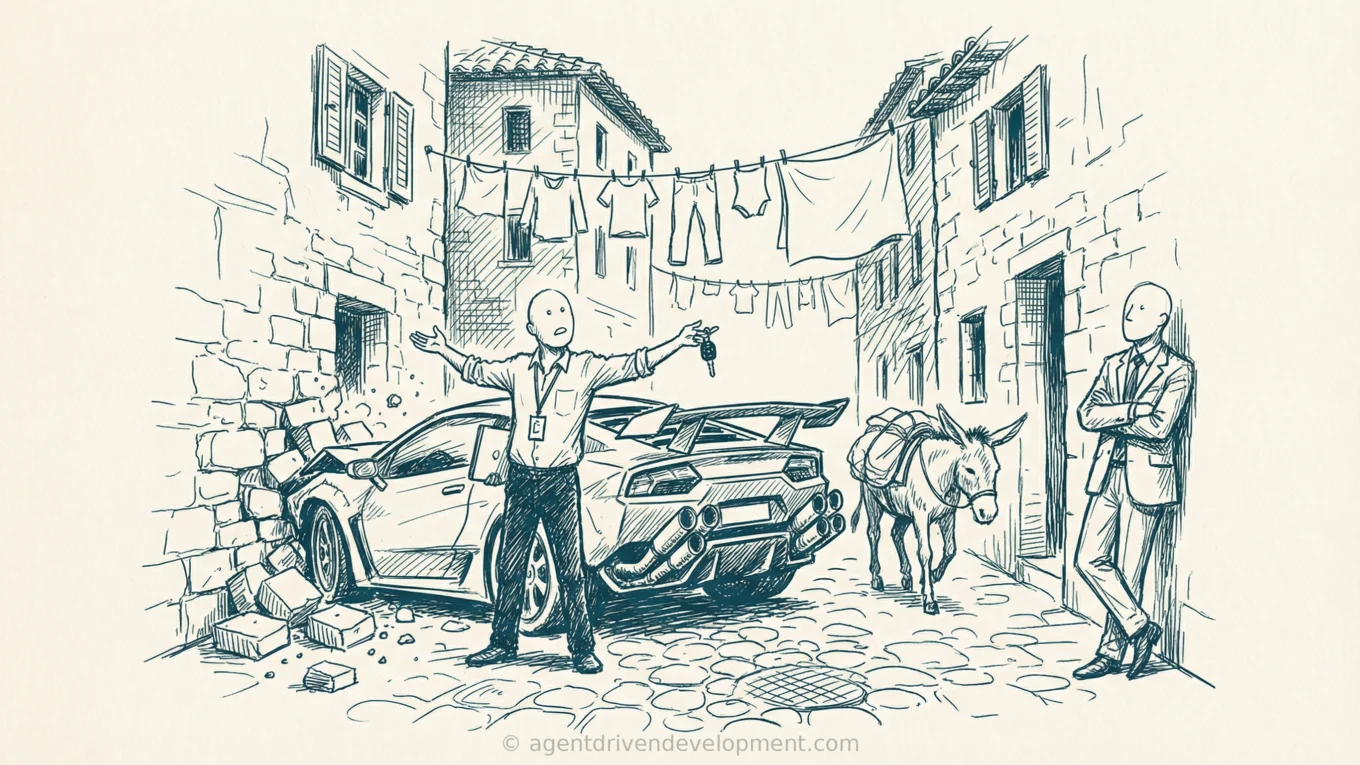

Now ask the last-mile question again: what is the fastest form of ground transportation in Marseille? It is not a 53-foot semi. It is not a Porsche. The streets do not care how fast your vehicle can go because the streets were not designed for vehicles at all.

You might honestly need a helicopter. Ground transportation, no matter how advanced or powerful or expensive, cannot overcome streets that were never built for it.

Your Organization Is Marseille

The tool is a commodity. The organizational adoption expertise is not. And every executive conversation I have starts with the wrong question: which tool is the Porsche?

You are standing in Marseille, a city with streets laid out over centuries by people who never imagined your vehicle, and you are asking which car has the best zero-to-sixty.

I recently worked with a company where a change took 34 days from idea to production. The AI tool generated the code in about four hours. The rest of those 34 days went to approvals, reviews, environment provisioning, a security scan queue, and two rounds of manual QA. They had optimized about 0.5% of their cycle time and left the other 99.5% untouched.
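The arithmetic behind that example is easy to check. The sketch below assumes the 34 days are calendar days of 24 hours each, which the example does not state; `coding_share` is an illustrative helper name, not part of any tool. Counting in whole days instead (33 of 34) puts the untouched share near 97%; counting in hours puts it higher.

```python
def coding_share(coding_hours: float, total_days: float, hours_per_day: float = 24) -> float:
    """Fraction of the idea-to-production cycle spent generating code.

    Assumes calendar days of `hours_per_day` hours (an assumption; the
    example only says "34 days").
    """
    total_hours = total_days * hours_per_day
    if total_hours <= 0:
        raise ValueError("total cycle time must be positive")
    return coding_hours / total_hours


# Figures from the example: 34 days end to end, about 4 hours of code generation.
share = coding_share(coding_hours=4, total_days=34)
print(f"code generation: {share:.1%} of cycle time")
print(f"everything else: {1 - share:.1%} of cycle time")
```

Even if the tool cut those four hours to zero, the cycle would still be about 34 days long.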

And this is not just an engineering problem. Your marketing team’s content approval chain has the same disease — a campaign that an AI tool can draft in an afternoon sits in brand review for eleven business days, then legal for another eight. Your customer service operation has a knowledge base that requires four sign-offs to update, so your agents are reading answers that were written before the product shipped its last three releases.

Those are all streets. Different departments, different vehicles, same underlying constraint.

Not All Gates Are Scar Tissue

I need to be careful here, because “fix your streets” is not the same thing as “tear everything down.”

Some of your gates are load-bearing. If you are in healthcare, your clinical validation process exists because patients die when it fails. If you are in financial services, your SOX controls exist because regulators will shut you down. If you are running critical infrastructure, your change management exists because the lights go off.

I have found that about 40% of gates in a typical enterprise approval chain are genuinely load-bearing. They catch real problems. You redesign those to be faster, but you do not remove them. The other 60% are scar tissue — someone got burned, someone added a gate, the incident was resolved five years ago, the gate remained. Nobody remembers why it is there. Nobody wants to be the person who removes it.

Knowing the difference is the entire job.

You Are Measuring the Wrong Things

I sat in a meeting last quarter where a VP of Engineering told me his team had just finished a six-month tool evaluation. They surveyed 200 engineers, ran satisfaction scores, measured developer experience across four platforms, and chose the one that scored highest on happiness.

I asked him one question: how long does it take a change to get from your best engineer’s laptop to production?

He did not know.

His developers were happy with the tool. They were also shipping once every three weeks because the deployment pipeline had eleven gates, two of them manual, one of which required a committee that met on alternating Tuesdays. The tool was generating code faster than the organization could absorb it, and nobody had noticed because they were measuring the wrong thing.

Developer happiness with a specific AI tool is measuring how much your team enjoys driving the Porsche around the parking lot while the streets remain impassable. And it is not the only measurement problem I see.

A CTO walks into a board meeting and presents DORA metrics (Deployment Frequency, Lead Time for Changes, Change Failure Rate, Mean Time to Recovery). The engineering team is proud. The numbers are up and to the right. The CFO looks at the slide and asks one question: what did that do to our margin?

Silence.

DORA metrics measure how well your engineering organization moves code. They do not measure whether that code generated revenue, reduced cost, improved customer retention, or expanded margin. If your AI adoption strategy is oriented around improving DORA scores, you are presenting engine RPM numbers to someone who asked about fuel economy.

The organizations that get AI adoption right do not report “we deployed 40% more frequently.” They report “we reduced time-to-market on new product features by 60%, which contributed to a 12% increase in net revenue retention” or “we cut our cost per feature by half, which improved gross margin by 200 basis points.” Same data, different framing. One of them gets your budget renewed. The other gets a polite nod and a follow-up email asking what it actually means for the business.

If your AI adoption goals are not framed around margin, revenue, cost reduction, or customer outcomes, you are building a dashboard that impresses engineers and confuses everyone else. That is not a technology problem. That is a leadership alignment problem.

The Cost of Not Fixing Your Streets

Let me put a number on this because “fix your streets” without a dollar amount is just philosophy.

If your engineering organization has 200 people at a fully loaded cost of $200,000 per engineer per year (salary, benefits, tooling, overhead), you are spending $40 million a year on engineering. If 60% of your cycle time is wait time in approval queues and handoffs (and that 60% is conservative based on what I have measured), that is $24 million a year in wages spent waiting.
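Here is the same calculation as a small sketch you can rerun with your own numbers. It follows the paragraph's simplification that the share of cycle time spent waiting maps directly onto the same share of payroll; `annual_wait_cost` is a hypothetical helper name, and the extra wait fractions in the loop are only there to show sensitivity.

```python
def annual_wait_cost(engineers: int, loaded_cost: float, wait_fraction: float) -> float:
    """Yearly payroll attributed to waiting, under the simplifying assumption
    that wait share of cycle time equals wait share of payroll."""
    if not 0 <= wait_fraction <= 1:
        raise ValueError("wait_fraction must be between 0 and 1")
    return engineers * loaded_cost * wait_fraction


ENGINEERS = 200          # headcount from the paragraph above
LOADED_COST = 200_000    # fully loaded cost per engineer per year, in dollars

payroll = ENGINEERS * LOADED_COST
print(f"annual engineering spend: ${payroll:,.0f}")

# 0.60 is the figure used above; the other values are illustrative only.
for wait in (0.60, 0.70, 0.80):
    cost = annual_wait_cost(ENGINEERS, LOADED_COST, wait)
    print(f"wait share {wait:.0%}: ${cost:,.0f} per year")
```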

Your competitor who fixed their streets six months ago is getting two to three times the output from the same payroll. That gap compounds every quarter. In 18 months, the difference is not 20% or 30%; it is multiples. The compounding is the part that boards underestimate.

How to Start: Four Questions

I am not going to hand you a 90-day roadmap because those are fiction. But I will give you the four questions I ask in every engagement, and they work whether you are a CTO, a COO, a CMO, or a CPO. The streets problem does not belong to engineering. It belongs to whoever owns the process.

0. What is the business outcome? Before you map a single workflow, answer this: what business result is this AI investment supposed to produce? Not an engineering metric. Not DORA scores. Not developer satisfaction. A business outcome that your CFO can put in a board deck. Revenue growth, margin improvement, cost reduction, customer retention, time-to-market compression. If you cannot articulate the business outcome in one sentence, you are not ready to evaluate tools, redesign streets, or spend a dollar. Everything that follows depends on this answer, because this is how you will know whether any of it worked.

1. Map the wait. Pick one workflow that matters to that business outcome. Map every step from idea to live. Mark which steps are work and which steps are waiting. In my experience the ratio is usually 15-20% work, 80-85% waiting. If your number is better than that, congratulations. If it is not, you now know where the problem is.

2. Sort your gates. For every approval, review, or handoff in that workflow, ask: what does this gate catch? If nobody can name a specific incident in the last two years, it is probably scar tissue. Could this gate be automated, parallelized, or reduced from a committee to a single owner? Most gates that were designed for a 5-person review can be handled by one person with the right context and a clear escalation path.

3. Ask who benefits from the current design. This is the uncomfortable one. Some gates exist because the people who operate them derive authority, headcount, or budget from their existence. Removing the gate threatens someone’s role. That is not cynical, that is organizational physics. You cannot fix the streets without understanding who built their house on the current road. Those people need a role in the new design, not a pink slip.

That last question, number 3, is the one most consultants skip. It is also the one that determines whether the redesign sticks. Because streets are not just process, they are people. The 51-year-old engineer who has run that approval gate for 15 years is not an obstacle. He is a subject matter expert who knows why the gate was built. Put him on the redesign team. He will tell you which gates are load-bearing faster than any audit will. I wrote about this in more detail in The Engineers Who Can’t Use AI Agents Don’t Have a Tools Problem, and the pattern is the same every time.
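Questions 1 and 2 lend themselves to a spreadsheet, or to a few lines of code. The following is a minimal sketch, not a prescribed method: the `Step` record, the sample workflow, and every hour and date in it are hypothetical. The only rule taken from the text is the two-year test, where a gate with no named catch inside the lookback window is flagged as probable scar tissue.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional


@dataclass
class Step:
    name: str
    hours: float
    is_wait: bool                       # True if nothing is being worked on
    is_gate: bool = False               # approval, review, or handoff
    last_catch: Optional[date] = None   # last specific incident the gate caught


def wait_ratio(steps: List[Step]) -> float:
    """Question 1: share of elapsed time that is waiting rather than work."""
    total = sum(s.hours for s in steps)
    if total <= 0:
        raise ValueError("workflow has no recorded time")
    return sum(s.hours for s in steps if s.is_wait) / total


def probable_scar_tissue(steps: List[Step], today: date, lookback_years: int = 2) -> List[str]:
    """Question 2: gates with no named catch inside the lookback window."""
    cutoff = today - timedelta(days=365 * lookback_years)
    return [
        s.name
        for s in steps
        if s.is_gate and (s.last_catch is None or s.last_catch < cutoff)
    ]


# Hypothetical workflow; replace with your own measurements.
workflow = [
    Step("write code", 4, is_wait=False),
    Step("review queue", 46, is_wait=True),
    Step("peer review", 2, is_wait=False, is_gate=True, last_catch=date(2025, 3, 1)),
    Step("architecture committee", 336, is_wait=True, is_gate=True),
    Step("security scan queue", 95, is_wait=True),
    Step("security scan", 1, is_wait=False, is_gate=True, last_catch=date(2025, 1, 15)),
    Step("manual QA", 16, is_wait=False, is_gate=True, last_catch=date(2021, 6, 1)),
]

print(f"waiting: {wait_ratio(workflow):.0%} of elapsed time")
print("review these gates first:", probable_scar_tissue(workflow, today=date(2025, 6, 1)))
```

The output is a list of candidates, not a verdict. Question 3 still applies to every gate on it, and the person who runs the gate is usually the fastest way to find out whether it is load-bearing.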

The Last-Mile Question for Your Organization

You are the last-mile carrier. You are trying to deliver working software, faster products, better customer outcomes, with AI tools that did not exist two years ago. What do your streets look like? Were they designed with intention, for the vehicles that will use them? Or were they designed a thousand years ago, by people who could not have imagined what you are trying to drive?

Because if you do not know the answer to that question, it does not matter what you buy. You are going to end up like me in Marseille, gently crunching into a 400-year-old wall in the most advanced vehicle the rental company had to offer, wondering why the car did not save you.

Pick a partner who will help you fix your streets, not a vendor who will sell you a faster car for roads that cannot support it. What happens to your organization if you do not?